Designing AI-Powered Cybersecurity Systems: A Smarter Approach to Threat Detection

Overview

This project explores how AI can be used to improve decision-making in cybersecurity by supporting Security Operations Center (SOC) analysts throughout the threat response process. Through research and collaboration with analysts, key challenges such as alert overload, lack of transparency, and fragmented workflows were identified. In response, the solution focuses on an AI-powered interface that prioritises alerts, provides clear explanations, and enables fast, informed actions within a single, unified system.

Role

Senior UX Designer

Contributions

- Shaping strategy

- User research and testing

- End-to-end design

Company

Rapid7

01. Project introduction

As cyber threats grow in complexity and scale, traditional rule-based security systems are no longer sufficient. Security teams face overwhelming volumes of alerts, many of which are false positives, leading to missed critical threats.

This case study explores how artificial intelligence can enhance cybersecurity systems by improving threat detection, automating responses, and reducing cognitive load for security analysts.

02. The Problem

Security Operations Centers (SOCs) struggle with:

- High volumes of alerts (alert fatigue)

- Slow threat detection and response times

- Difficulty identifying unknown (zero-day) attacks

- Lack of clarity in decision-making tools

How might we design an AI-powered system that helps security analysts detect, understand, and respond to threats more efficiently?

03. Discovery and Research

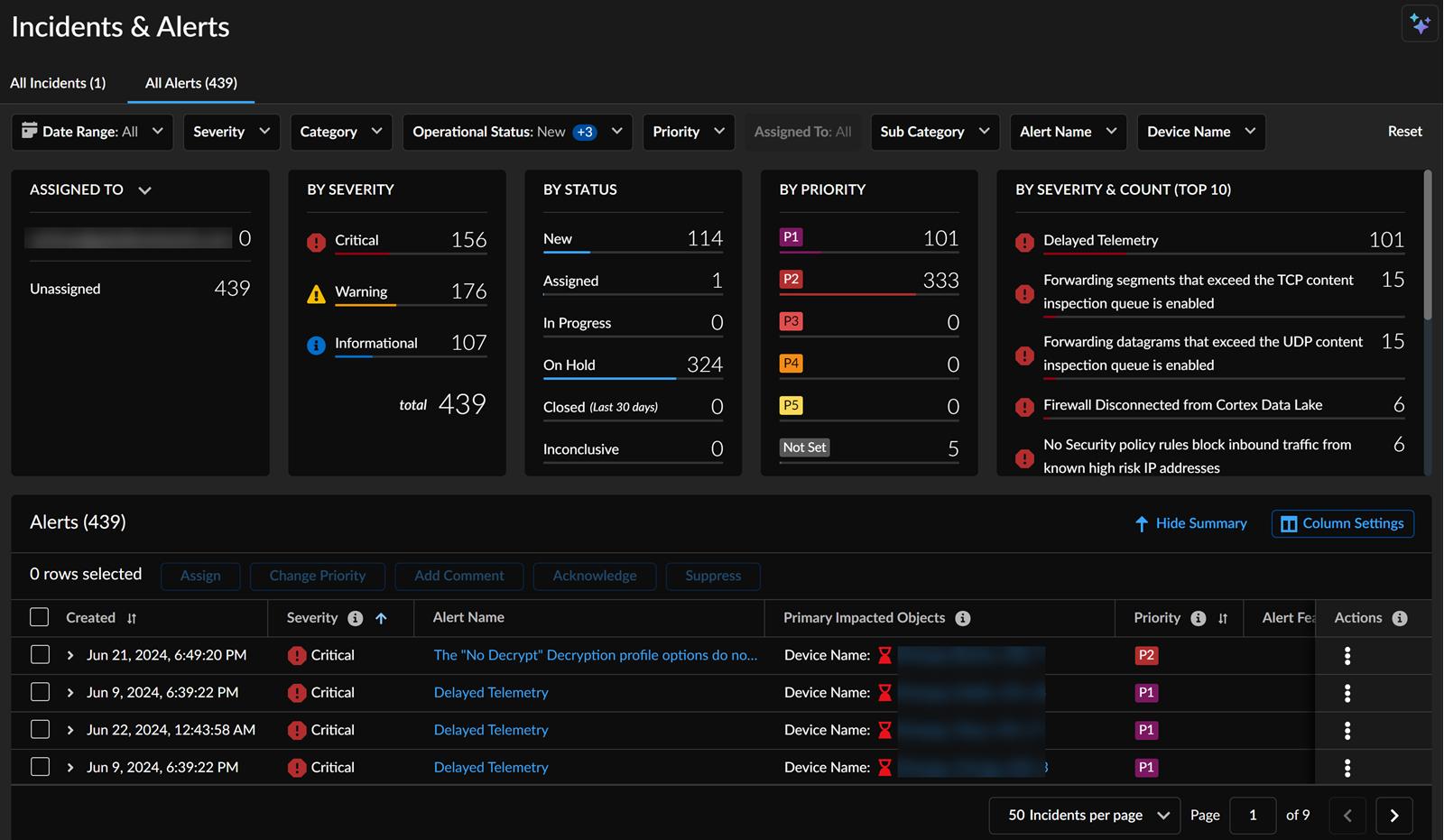

Market landscape analysis

To understand how AI is currently used in cybersecurity, I conducted a review of leading industry platforms and emerging trends.

Platforms Reviewed:

- Darktrace

- CrowdStrike

- Palo Alto Networks

- Microsoft Defender

Key Observations:

- Most platforms already integrate AI for threat detection and automation

- Interfaces are often data-heavy and complex, requiring expert knowledge

- Limited transparency in AI decisions (black-box problem)

- Alert systems tend to generate high volumes of notifications, contributing to fatigue

Strengths Across the Market:

- Real-time monitoring and detection

- Strong backend AI capabilities

- Automated response features

Gaps & Opportunities:

- Poor user experience for analysts under pressure

- Lack of clear, human-readable explanations

- Limited support for decision-making workflows

Key insight:

AI in cybersecurity is not failing at detection; it is failing at decision support. Analysts don’t struggle to find threats; they struggle to act on them confidently.

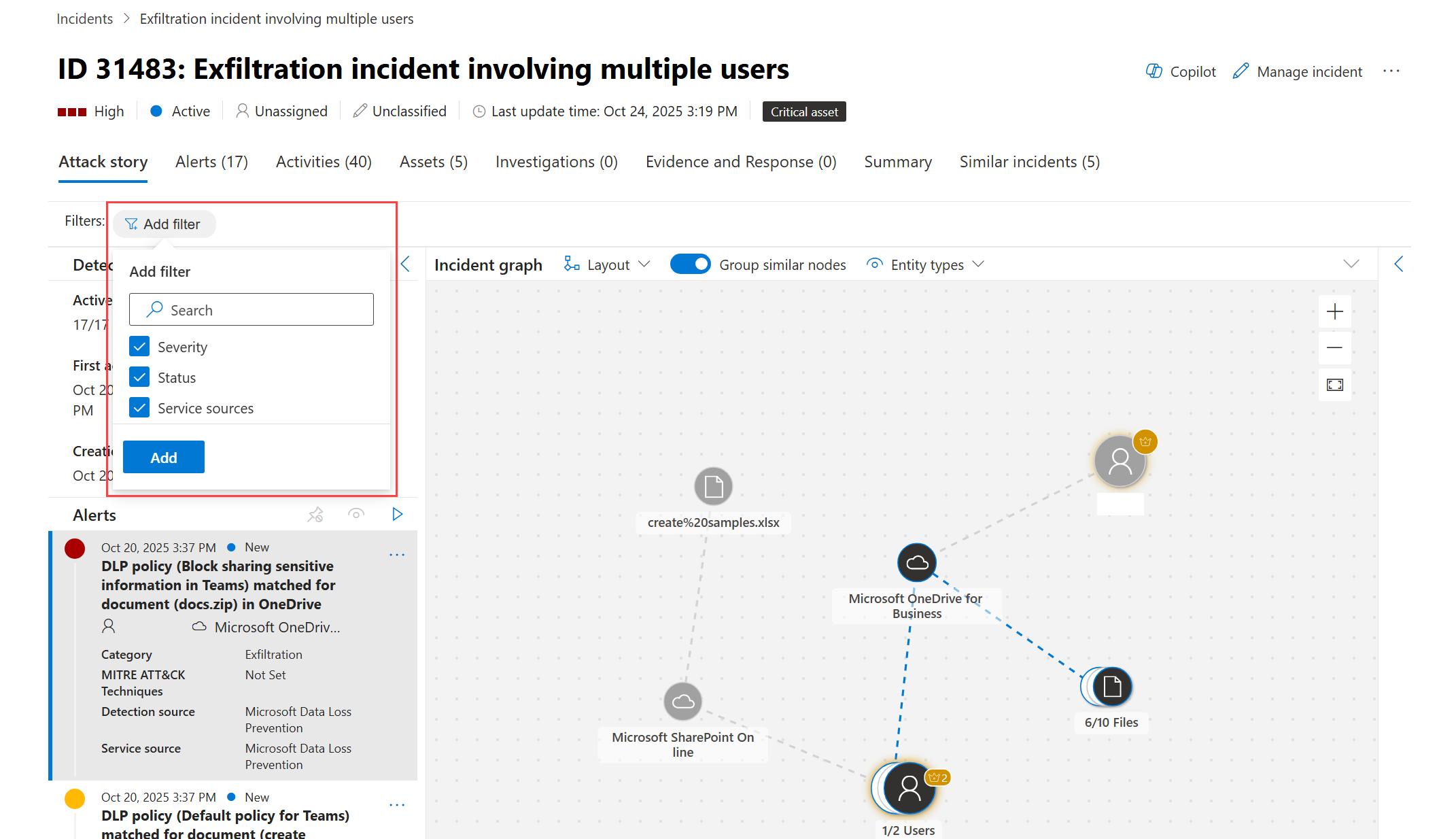

Palo Alto Networks, Microsoft Defender and Darktrace platforms.

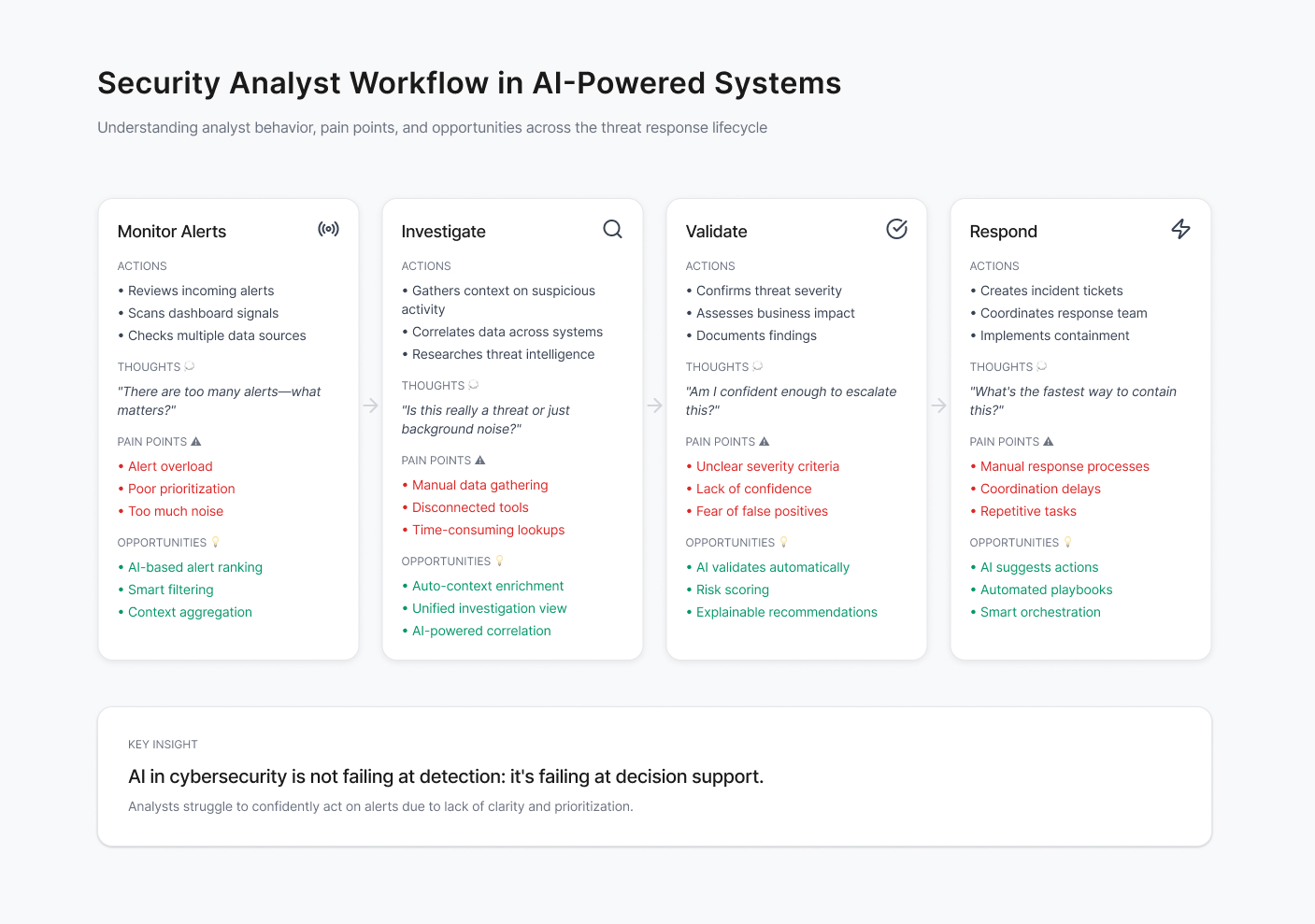

Journey Mapping

1. Signal vs Noise Problem

- Alerts are abundant but not prioritised effectively

- Analysts develop habits of ignoring alerts

Result: Important threats risk being missed

2. Cognitive Overload

- Too much raw data, not enough structured insight

- Analysts must interpret everything manually

Result: Mental fatigue + slower decisions

3. Lack of Explainability

- AI flags issues but doesn’t justify them clearly

- Analysts don’t trust or rely on automation

Result: Underutilised AI systems

4. Fragmented Tooling

- Multiple systems → multiple interfaces

- No unified workflow

Result: Time lost in navigation, not analysis

5. Slow Actionability

- Even after identifying a threat, response takes time

- Manual execution introduces delay

Result: Increased impact of attacks

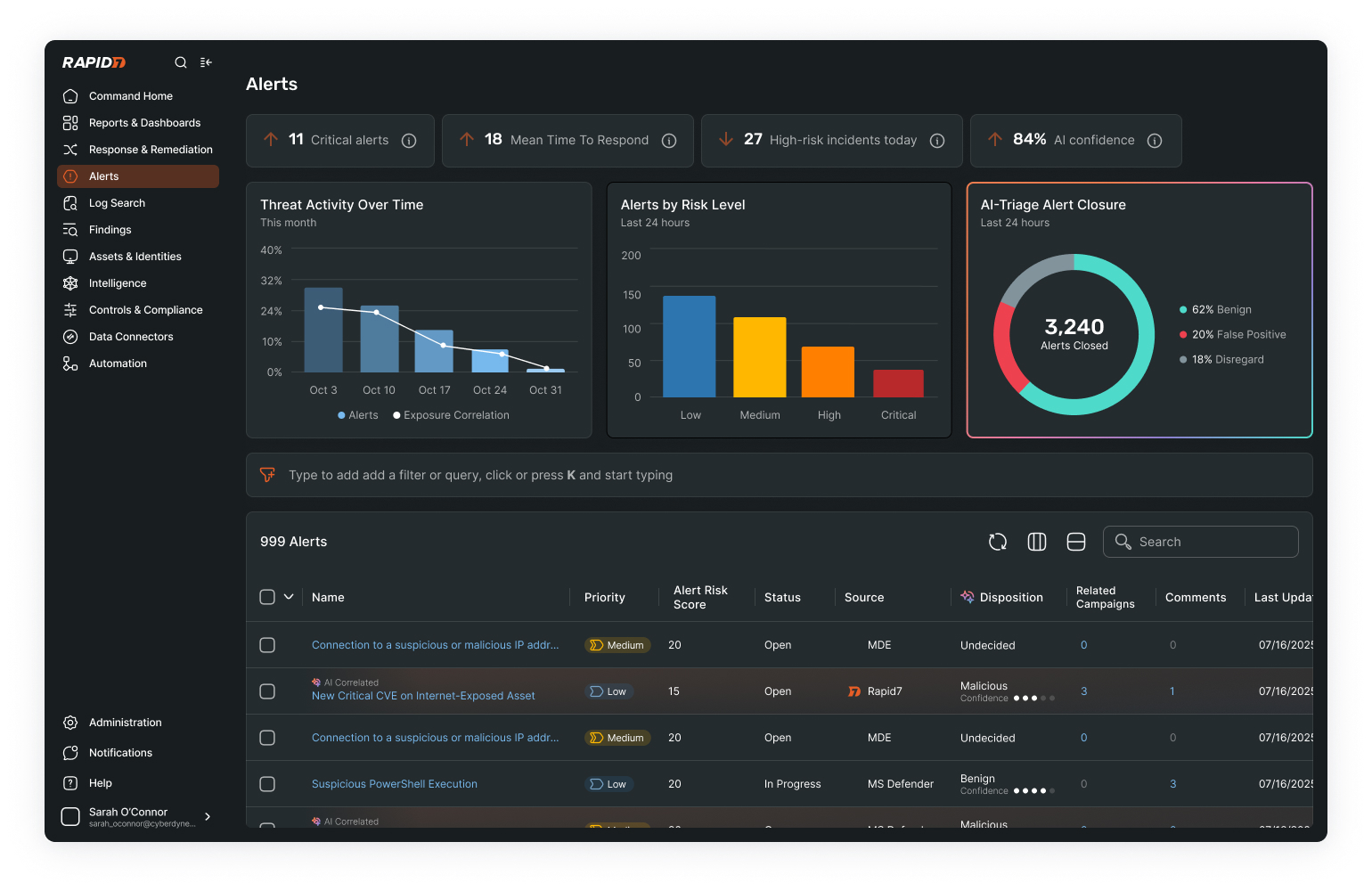

Examples of two siloed products that each include asset management as part of their offering.

Workshop: Co-Designing with SOC Analysts

Objective

To validate initial assumptions and uncover real-world challenges, we conducted a collaborative workshop with 15 Security Operations Center (SOC) analysts from a range of experience levels.

The goal was to move beyond desk research and understand:

- How analysts actually work under pressure

- Where current tools fail them

- How AI could meaningfully support their workflow

Participants mapped their end-to-end threat response process, revealing consistent stages but a strong reliance on personal judgement rather than system guidance. Through collaborative discussions, key frustrations emerged, particularly alert overload, lack of context, fragmented tools, and low trust in AI-driven decisions.

A focused conversation on AI highlighted that analysts are not opposed to automation, but to systems that lack transparency. In response, participants co-designed ideal solutions, emphasising the need for explainable alerts, confidence scoring, unified data views, and faster, more intuitive response actions. This session reinforced that the core opportunity lies not in improving detection alone, but in supporting clearer, faster decision-making.

Key Insights from the Workshop:

1. Decision-making is the real bottleneck

Analysts don’t struggle to detect threats; they struggle to decide what to do next.

2. Trust is built through transparency

AI systems are often ignored when they behave like a “black box.”

3. Context is more valuable than volume

More data doesn’t help; better-structured insight does.

4. Speed is critical, but clarity comes first

Analysts prefer slightly slower systems if they are more understandable and reliable.

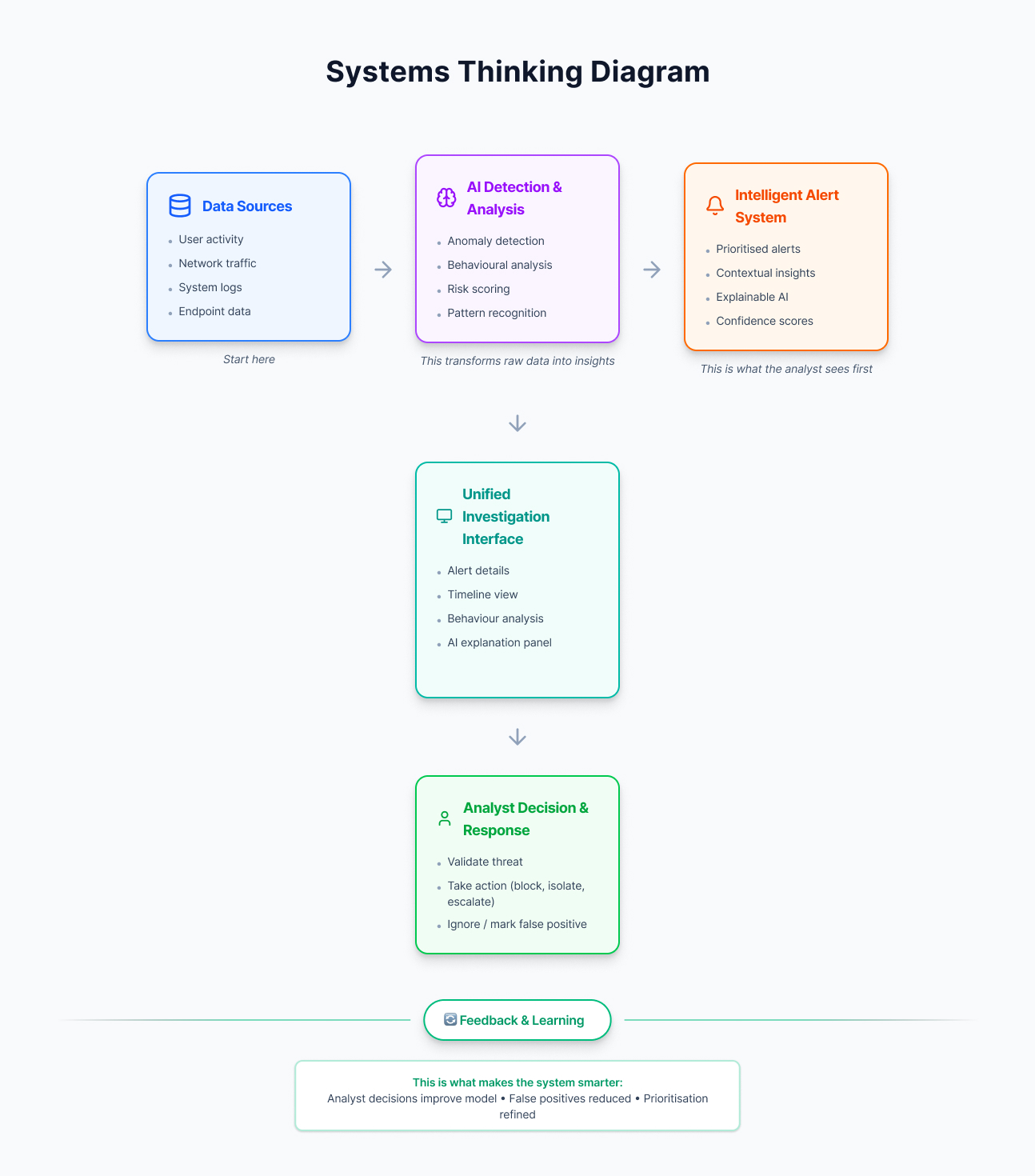

Systems Thinking Diagram

04. Conceptualisation

The concept is guided by a set of core design principles aimed at improving how analysts interact with AI-driven systems. Information should be prioritised and simplified to reduce cognitive load, allowing analysts to quickly understand what matters and why. AI decisions must be transparent, providing clear explanations and supporting evidence to build trust. Insights should be directly actionable, enabling faster transitions from detection to response. All relevant data and tools should be brought into a single, unified interface to minimise context switching, while maintaining a human-in-the-loop approach where automation supports, rather than replaces, analyst decision-making in high-risk scenarios.

These principles respond to what the research showed: most existing interfaces are dense and require expert interpretation. Visualisations such as anomaly graphs and threat timelines provide deep insight but can increase cognitive load under pressure.

The solution is centred around four key components:

Intelligent Alert System

A prioritised alert feed that highlights high-risk threats and reduces noise.

AI Summaries

A dedicated space showing why an alert was triggered, including behavioural patterns and evidence.

Unified Threat View

A consolidated interface combining logs, user activity, and historical data in one place.

Rapid Response Actions

Predefined, easy-to-execute actions such as blocking IPs or isolating devices.
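
To make the four components concrete, here is a minimal sketch of how a single alert card might be modelled so that the explanation, evidence, and response actions travel together. The `Alert` class, its field names, and the 0 to 1 risk scale are assumptions made for illustration; they do not describe an existing Rapid7 data model.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Alert:
    """Hypothetical alert record; all field names are illustrative assumptions."""
    title: str
    risk: float                                               # assumed scale: 0.0 (benign) to 1.0 (critical)
    explanation: str                                          # AI summary: why the alert was triggered
    evidence: List[str] = field(default_factory=list)         # behavioural patterns, log excerpts
    related_events: List[str] = field(default_factory=list)   # unified threat view: linked activity
    actions: List[str] = field(default_factory=list)          # rapid response actions on offer


def summarise(alert: Alert) -> str:
    """Build the plain-text summary shown when an analyst opens the alert."""
    lines = [f"{alert.title} (risk {alert.risk:.0%})", f"Why: {alert.explanation}"]
    lines += [f"Evidence: {item}" for item in alert.evidence]
    if alert.actions:
        lines.append("Suggested actions: " + ", ".join(alert.actions))
    return "\n".join(lines)
```

Keeping the explanation and evidence on the same record as the actions reflects the design principle that an analyst should never have to leave the alert to understand or act on it.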

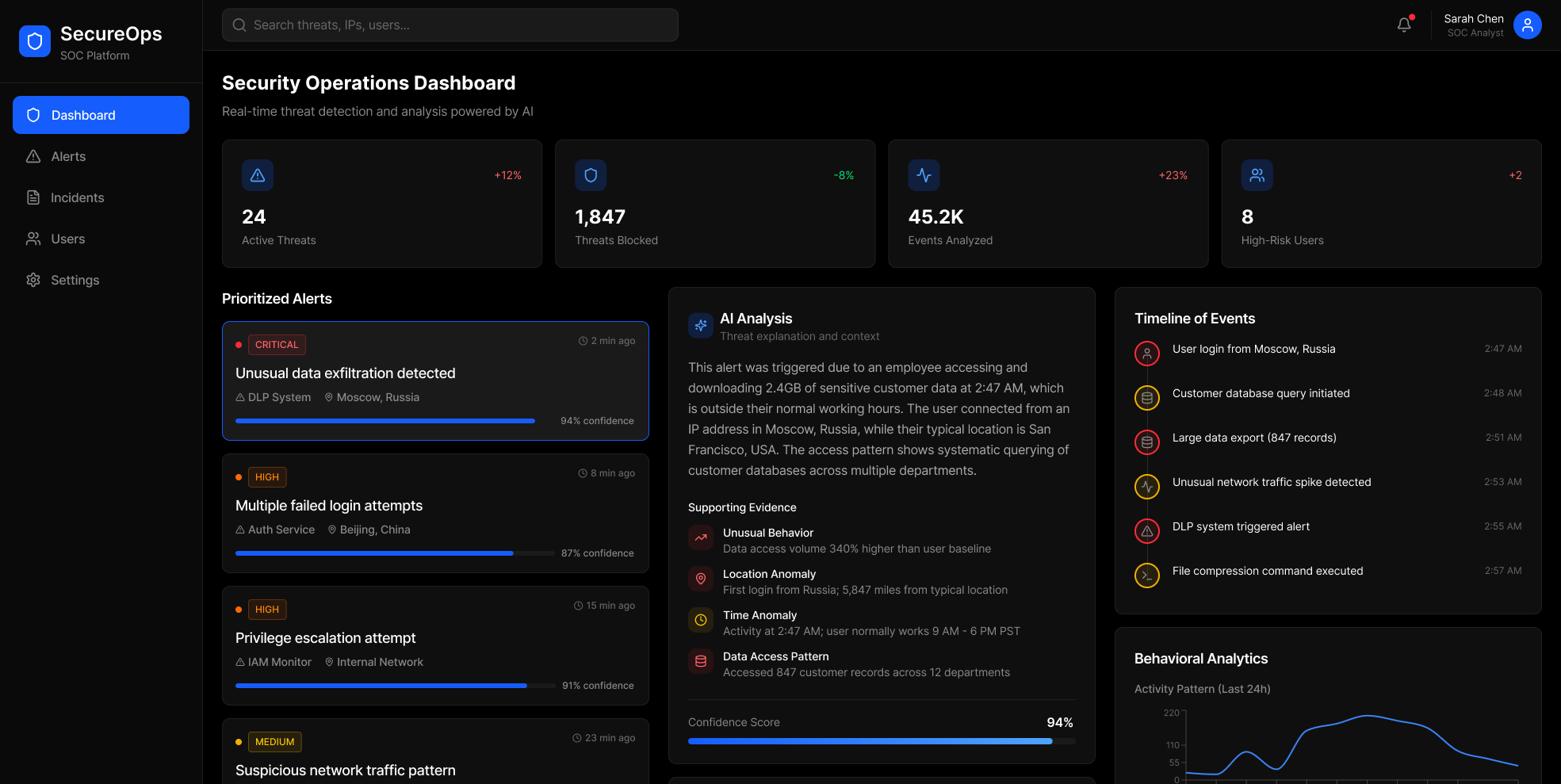

UI Exploration

Between sketching and vibe coding, I put together some concepts for how we could approach the UI.

An experiment placing all the necessary information in organised cards.

When a user clicks on a card, an AI summary appears in the middle of the screen so as not to overwhelm them.

AI Alert Summary Types

I worked with the content team to explore alert types that users can become accustomed to based on the nature of the alert.

Proactive alerts

Alerts generated before a threat fully occurs, based on predictive patterns and risk signals.

Examples:

- “User behaviour deviating from normal patterns”

- “System showing early signs of compromise”

Prioritised alerts

Alerts automatically ranked and filtered based on risk, context, and potential impact.

Examples:

- Critical threats surfaced at the top

- Low-risk alerts grouped or deprioritised
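
The ranking and grouping behaviour described above could be sketched as a weighted score with a cut-off. The weights, the threshold, and the `ScoredAlert` fields are all assumed values for illustration; a production system would derive them from real detection data and analyst testing.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumed weights and cut-off, chosen only to illustrate the idea.
WEIGHTS = {"risk": 0.5, "context": 0.2, "impact": 0.3}
GROUPING_THRESHOLD = 0.3


@dataclass
class ScoredAlert:
    """Hypothetical alert with three signals, each on an assumed 0.0 to 1.0 scale."""
    name: str
    risk: float
    context: float
    impact: float


def priority(alert: ScoredAlert) -> float:
    """Combine risk, context, and impact into a single weighted score."""
    return sum(weight * getattr(alert, signal) for signal, weight in WEIGHTS.items())


def prioritise(alerts: List[ScoredAlert]) -> Tuple[List[ScoredAlert], List[ScoredAlert]]:
    """Return (surfaced, grouped): high scores first, low scores set aside together."""
    ranked = sorted(alerts, key=priority, reverse=True)
    surfaced = [a for a in ranked if priority(a) >= GROUPING_THRESHOLD]
    grouped = [a for a in ranked if priority(a) < GROUPING_THRESHOLD]
    return surfaced, grouped
```

Low-scoring alerts are grouped rather than discarded, so an analyst can still review them when time allows.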

Contextual alerts

Alerts enriched with relevant information and background context to support understanding.

Examples:

- Related user activity

- Historical behaviour comparisons

- Linked events or incidents

Actionable alerts

Alerts that directly suggest or enable next steps.

Examples:

- “Block IP”

- “Isolate device”

- “Escalate incident”

Adaptive alerts

Alerts that improve over time based on analyst behaviour and feedback.

Examples:

- System learns which alerts are ignored or acted on

- Adjusts prioritisation accordingly
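
One simple way such feedback could adjust prioritisation is an exponential moving average per alert type. This is a sketch under stated assumptions, not the learning method of any particular platform: the `AdaptiveWeights` class, the learning rate, and the neutral starting value of 0.5 are all hypothetical.

```python
from collections import defaultdict


class AdaptiveWeights:
    """Tracks, per alert type, how often analysts act on an alert (assumed design)."""

    def __init__(self, learning_rate: float = 0.2):
        self.learning_rate = learning_rate
        # Every alert type starts at a neutral 0.5 until feedback arrives.
        self.weights = defaultdict(lambda: 0.5)

    def record(self, alert_type: str, acted_on: bool) -> None:
        """Nudge the weight towards 1.0 when acted on, towards 0.0 when ignored."""
        target = 1.0 if acted_on else 0.0
        current = self.weights[alert_type]
        self.weights[alert_type] = current + self.learning_rate * (target - current)

    def adjust(self, alert_type: str, base_score: float) -> float:
        """Scale a base priority score; a neutral weight leaves it unchanged."""
        return base_score * (0.5 + self.weights[alert_type])
```

Because the multiplier never drops below 0.5, a frequently ignored alert type is deprioritised but never silenced, which keeps the analyst in control of what is ultimately dismissed.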

05. Solution

In response to the challenges identified through research and workshop insights, the final solution is an AI-powered cybersecurity platform designed to support Security Operations Center (SOC) analysts in making faster, clearer, and more confident decisions.

Rather than focusing solely on threat detection, the system reframes AI as a decision-support tool, helping analysts prioritise, understand, and respond to threats with reduced cognitive load.

The platform enhances the analyst workflow across four key stages:

- Monitor: Alerts are intelligently prioritised based on risk and relevance, reducing noise and highlighting critical threats

- Investigate: Each alert is enriched with contextual data and clear AI explanations, removing the need for manual data gathering

- Validate: Confidence scores and supporting evidence help analysts quickly assess the severity and legitimacy of threats

- Respond: Recommended actions and one-click responses enable rapid and effective incident management
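
The Validate and Respond stages could be connected by a small gating rule that keeps a human in the loop for high-risk cases. The sketch below is illustrative only: the `Recommendation` fields, the mode names, and both thresholds are assumptions rather than values taken from the research.

```python
from dataclasses import dataclass

# Assumed cut-offs for illustration; real values would come from testing with analysts.
MIN_CONFIDENCE = 0.8
HIGH_RISK = 0.7


@dataclass
class Recommendation:
    """Hypothetical recommended action attached to an alert."""
    action: str
    confidence: float   # confidence that the threat is genuine, 0.0 to 1.0
    risk: float         # estimated impact if the threat is genuine, 0.0 to 1.0


def response_mode(rec: Recommendation) -> str:
    """Decide how the action is offered; the analyst triggers it in every mode."""
    if rec.confidence < MIN_CONFIDENCE:
        return "investigate"   # show the evidence first, offer no action yet
    if rec.risk >= HIGH_RISK:
        return "confirm"       # offer the action behind an explicit confirmation step
    return "one_click"         # offer the action as a single click
```

For example, `response_mode(Recommendation("Isolate device", 0.95, 0.9))` returns `"confirm"`, so a disruptive action on a high-risk alert still passes through an analyst's explicit approval.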

The final concept transforms cybersecurity systems from passive monitoring tools into active decision-support platforms, empowering analysts to move from detection to action with greater speed, clarity, and confidence.

This solution aligns advanced AI capabilities with real human needs, ensuring technology supports rather than overwhelms the people responsible for security.

An efficient experience in which an analyst has a clearer view of what they need to prioritise and why.

This interface presents a centralised AI-powered cybersecurity dashboard that helps SOC analysts monitor and prioritise threats in real time. It combines high-level metrics, visual insights, and a detailed alert feed to support faster, more informed decision-making and response.

This interface allows analysts to quickly select an alert and view a detailed summary within the same screen, eliminating the need to navigate between multiple tools. By keeping investigation, context, and actions in one place, it enables faster exploration and more efficient decision-making through seamless interaction.

06. Reflection

This project reinforced the importance of designing AI systems around human decision-making rather than technical capability alone. While the technology behind threat detection is highly advanced, the real challenge lies in making that intelligence clear, trustworthy, and actionable for analysts working under pressure. Through research and collaboration, I learned that reducing cognitive load and improving transparency can have a greater impact than adding more features. If I were to take this further, I would explore validating the concepts with real users and refining how AI explanations are presented in high-stakes scenarios.